Advanced Natural Language Understanding (NLU) for Intelligent Conversational AI

Oodles builds enterprise-grade Natural Language Understanding (NLU) systems that transform raw text into structured meaning using Python-based NLP pipelines, transformer models, and scalable API architectures. Our NLU solutions power chatbots, voice assistants, and virtual agents with deep intent understanding, entity extraction, sentiment detection, and contextual awareness.

What is Natural Language Understanding (NLU)?

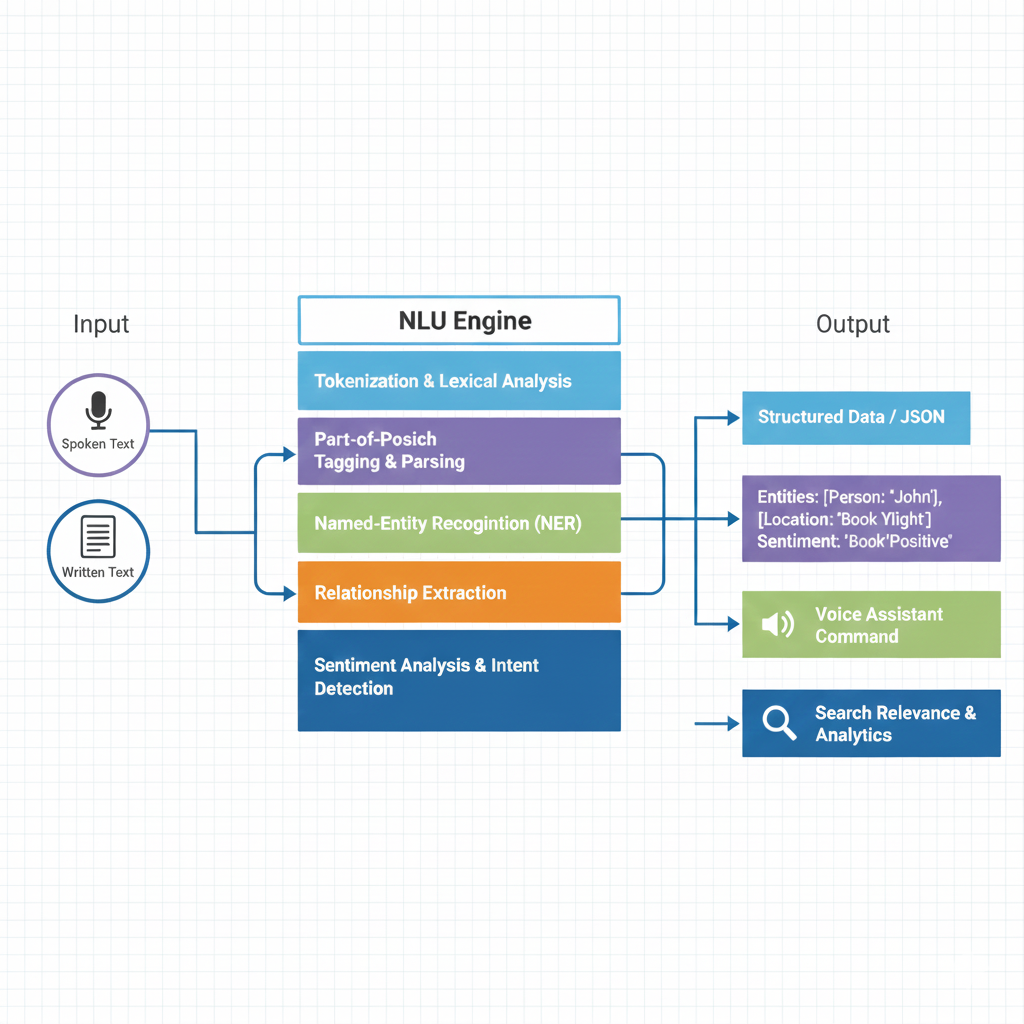

Natural Language Understanding (NLU) is a branch of Natural Language Processing (NLP) that enables machines to accurately interpret user language by converting unstructured text or speech into structured data that systems can act upon.

- Intent Classification – Identify the user’s goal using ML and transformer models

- Named Entity Recognition (NER) – Extract domain-specific entities such as dates, locations, IDs

- Sentiment & Emotion Analysis – Detect tone using deep learning classifiers

- Context Management – Track conversation state across multiple turns

- Slot Filling & Dialogue State Tracking – Capture missing or required information
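To make "structured data that systems can act upon" concrete, here is a minimal sketch of the kind of result an NLU layer might return for a single utterance. The keyword rules and regular expressions are illustrative stand-ins for trained models, and every name in it (`NLUResult`, `parse`, `INTENT_KEYWORDS`) is hypothetical rather than part of any Oodles API.

```python
import re
from dataclasses import dataclass, field

# Hypothetical keyword rules standing in for a trained intent classifier.
INTENT_KEYWORDS = {
    "book_flight": ("book", "flight"),
    "check_balance": ("balance",),
}


@dataclass
class NLUResult:
    text: str
    intent: str
    entities: dict = field(default_factory=dict)


def parse(text: str) -> NLUResult:
    """Turn a raw utterance into an intent label plus extracted entities."""
    lowered = text.lower()

    # Pick the first intent whose keywords all appear in the utterance.
    intent = "unknown"
    for name, keywords in INTENT_KEYWORDS.items():
        if all(keyword in lowered for keyword in keywords):
            intent = name
            break

    entities = {}
    # Toy entity pattern: an ISO-style date such as 2025-03-14.
    date_match = re.search(r"\b\d{4}-\d{2}-\d{2}\b", text)
    if date_match:
        entities["date"] = date_match.group()
    # Toy entity pattern: "to <Capitalized word>" read as a destination.
    destination_match = re.search(r"\bto ([A-Z][a-z]+)", text)
    if destination_match:
        entities["destination"] = destination_match.group(1)

    return NLUResult(text=text, intent=intent, entities=entities)
```

Calling `parse("Book a flight to Paris on 2025-03-14")` yields the intent `book_flight` with `destination` and `date` entities, which a downstream booking system could consume directly.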

Core NLU Capabilities We Deliver

Intent Recognition

High-accuracy intent classification using transformer-based models and supervised learning pipelines.

Named Entity Recognition

Extract structured entities using spaCy, CRF models, and contextual embeddings.

Sentiment & Emotion Analysis

Classify sentiment and emotional tone using deep learning classifiers.

Dialogue State Tracking

Maintain conversation context using memory-based and transformer-driven state models.

Why Enterprises Choose Our NLU Services

Domain-Trained NLU Models

Custom NLU models fine-tuned for banking, healthcare, retail, logistics, and enterprise workflows.

Multilingual Language Understanding

NLU pipelines supporting 30+ languages using multilingual transformer architectures.

Hybrid NLU Architecture

Combining rule-based logic, classical NLP, and deep learning for maximum precision.
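One common way to combine these layers is to check high-precision rules first and fall back to a learned model only when no rule fires. The sketch below illustrates that pattern under stated assumptions: the rules, intent names, and `stub_model` are hypothetical placeholders, not a description of a production system.

```python
import re
from typing import Callable, Optional, Tuple

# Hypothetical high-precision rules, checked before any model runs.
RULES = [
    (re.compile(r"\b(cancel|terminate)\b.*\border\b", re.I), "cancel_order"),
    (re.compile(r"\btrack\b.*\b(order|package)\b", re.I), "track_order"),
]


def rule_intent(text: str) -> Optional[str]:
    """Return the intent of the first matching rule, or None."""
    for pattern, intent in RULES:
        if pattern.search(text):
            return intent
    return None


def hybrid_intent(text: str, model: Callable[[str], str]) -> Tuple[str, str]:
    """Return (intent, source), where source is "rule" or "model"."""
    matched = rule_intent(text)
    if matched is not None:
        return matched, "rule"
    # No rule fired, so defer to the statistical or neural classifier.
    return model(text), "model"


def stub_model(text: str) -> str:
    # Placeholder for a trained classifier; always returns one label.
    return "general_inquiry"
```

Recording the source alongside the intent makes it easier to audit which layer produced each prediction.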

Continuous Model Improvement

Human-in-the-loop training, data annotation, and active learning pipelines.

Secure & Flexible Deployment

Deploy NLU services using FastAPI on cloud, on-premise, or hybrid infrastructure.

Platform Integration

Seamless integration with Dialogflow, Rasa, Amazon Lex, CRMs, and enterprise systems.

NLU-Powered Enterprise Solutions

Oodles delivers scalable Natural Language Understanding solutions that convert human language into actionable intelligence across enterprise use cases.

Enterprise Chatbots & Voice Assistants

Enable intelligent support for customer service, sales, HR, and internal operations.

Sentiment Analysis Engines

Analyze customer emotions in real time across email, chat, surveys, and social media.

Document Intelligence & Information Extraction

Extract structured data from contracts, invoices, forms, and enterprise documents.

Multilingual Virtual Agents

Communicate with customers in 100+ languages with native-level accuracy.

Call Center Automation

Transcribe, analyze, and route calls in real time with NLU-driven intelligence.

Custom NLU Model Training

Fine-tune BERT, RoBERTa, XLM-R, and GPT-based models on your proprietary datasets.

FAQs (Frequently Asked Questions)

NLU focuses on comprehension: interpreting intent, entities, and meaning from text. NLP is the broader field, which also covers generation, translation, and summarization. NLU powers intent classification for chatbots, slot filling for conversational AI, and semantic search.

We use multilingual models (XLM-R, mBERT) and fine-tune on your domain data. We support code-switching and low-resource languages. We also build language-agnostic intent schemas so one model can serve multiple languages.

Yes, our NLU handles both voice input and multi-turn conversations. We integrate NLU with automatic speech recognition (ASR) output for voice assistants, and we use dialogue state tracking and context windows so the model can follow a conversation across turns, including corrections, clarifications, and follow-up questions.
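As a rough illustration of dialogue state tracking, the sketch below keeps the active intent and filled slots across turns and reports which required slots are still missing. The `DialogueState` class and the `REQUIRED_SLOTS` schema are hypothetical; a production tracker would typically be model-driven rather than a plain dictionary.

```python
from dataclasses import dataclass, field
from typing import List, Optional

# Hypothetical schema: which slots each intent needs before it can be fulfilled.
REQUIRED_SLOTS = {"book_flight": ["origin", "destination", "date"]}


@dataclass
class DialogueState:
    intent: Optional[str] = None
    slots: dict = field(default_factory=dict)

    def update(self, intent: Optional[str], entities: dict) -> None:
        """Merge one turn's NLU output into the running state."""
        # Keep the previous intent when a follow-up turn carries none.
        if intent is not None:
            self.intent = intent
        # Newer values overwrite older ones, which covers user corrections.
        self.slots.update(entities)

    def missing_slots(self) -> List[str]:
        """List required slots that have not been filled yet."""
        required = REQUIRED_SLOTS.get(self.intent, [])
        return [slot for slot in required if slot not in self.slots]
```

A dialogue manager could use `missing_slots()` to decide which clarifying question to ask next.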

With sufficient domain data, we typically achieve 90%+ intent accuracy. We use active learning to prioritize labeling, and we add confidence thresholds and fallbacks. We also provide ongoing evaluation and retraining as data shifts.
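A confidence threshold with a fallback can be sketched as follows. The raw scores are assumed to come from some intent classifier, and the 0.7 threshold and the `fallback` label are illustrative values, not recommended settings.

```python
import math

FALLBACK_INTENT = "fallback"  # hypothetical label for "ask the user to rephrase"


def softmax(scores: dict) -> dict:
    """Convert raw classifier scores into probabilities that sum to 1."""
    highest = max(scores.values())
    # Subtract the maximum before exponentiating for numerical stability.
    exps = {name: math.exp(value - highest) for name, value in scores.items()}
    total = sum(exps.values())
    return {name: value / total for name, value in exps.items()}


def decide(scores: dict, threshold: float = 0.7):
    """Return (intent, confidence), falling back when confidence is too low."""
    probabilities = softmax(scores)
    best = max(probabilities, key=probabilities.get)
    confidence = probabilities[best]
    if confidence < threshold:
        return FALLBACK_INTENT, confidence
    return best, confidence
```

Low-confidence utterances routed to the fallback are also natural candidates for labeling in an active learning loop.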

Yes, we build custom NER for product names, dates, locations, and domain-specific slots. We combine rule-based patterns with neural models, and we handle composite entities and normalization (e.g., "next Tuesday" → ISO date).
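The normalization step can be illustrated with a small sketch that resolves phrases like "next Tuesday" against a reference date. It assumes "next Tuesday" means the first Tuesday strictly after the reference date (some speakers mean the Tuesday of the following week), and `normalize_relative_date` is a hypothetical helper, not a library function.

```python
from datetime import date, timedelta
from typing import Optional

WEEKDAYS = ["monday", "tuesday", "wednesday", "thursday",
            "friday", "saturday", "sunday"]


def normalize_relative_date(phrase: str, today: date) -> Optional[str]:
    """Resolve a relative date phrase to an ISO 8601 date string, or None."""
    words = phrase.lower().split()
    if words == ["today"]:
        return today.isoformat()
    if words == ["tomorrow"]:
        return (today + timedelta(days=1)).isoformat()
    if len(words) == 2 and words[0] == "next" and words[1] in WEEKDAYS:
        target = WEEKDAYS.index(words[1])  # Monday is 0, matching date.weekday()
        # Days until the first matching weekday strictly after `today` (1 to 7).
        delta = (target - today.weekday() - 1) % 7 + 1
        return (today + timedelta(days=delta)).isoformat()
    return None  # phrase not covered by this sketch
```

Passing the reference date explicitly, rather than reading the system clock inside the function, keeps the behavior reproducible in tests.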

We deploy via REST APIs, serverless functions, or embedded in chatbot platforms. We optimize for latency (ONNX, TensorRT) and scale with Kubernetes. We add monitoring, A/B testing, and versioning for safe updates.

A typical project takes 4–8 weeks: data collection and annotation, model training and evaluation, integration with your stack, and deployment. Complex multi-intent or multilingual projects may take longer. We provide incremental milestones and demos.