Transform Visual Content with Diffusion Model Applications

Oodles builds production-ready diffusion model applications for image generation, creative automation, and visual content pipelines. Our solutions are developed using Python, PyTorch, and Hugging Face Diffusers, and leverage Stable Diffusion, SDXL, DALL·E-style architectures, ControlNet, and LoRA fine-tuning to enable text-to-image generation, image editing, style transfer, and brand-consistent visual asset creation at enterprise scale.

What Is a Diffusion Model Application?

Diffusion Model Applications are end-to-end AI systems that use denoising diffusion probabilistic models (DDPMs) and latent diffusion architectures to generate, edit, and transform images from text prompts or reference inputs. These applications are typically built using Python, PyTorch, CUDA-accelerated GPUs, and diffusion libraries such as Hugging Face Diffusers, with supporting components like CLIP text encoders.
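To make the DDPM idea above concrete, here is a minimal sketch of the forward (noising) step and its inversion, written in plain Python rather than PyTorch so it runs anywhere. The linear schedule values (1e-4 to 0.02 over 1000 steps) and all function names are illustrative assumptions, not Oodles' implementation. In a real application a trained network predicts the noise; this sketch reuses the true noise to show that the algebra inverts cleanly.

```python
import math
import random


def linear_beta_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Linearly spaced noise variances, one per diffusion step (assumed values)."""
    if num_steps == 1:
        return [beta_start]
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]


def cumulative_alphas(betas):
    """alpha_bar_t is the running product of (1 - beta_s) for s <= t."""
    alpha_bars, running = [], 1.0
    for beta in betas:
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars


def add_noise(x0, t, alpha_bars, rng):
    """Sample x_t from q(x_t | x_0) in closed form; returns (x_t, noise)."""
    noise = [rng.gauss(0.0, 1.0) for _ in x0]
    signal_scale = math.sqrt(alpha_bars[t])
    noise_scale = math.sqrt(1.0 - alpha_bars[t])
    x_t = [signal_scale * x + noise_scale * n for x, n in zip(x0, noise)]
    return x_t, noise


def recover_x0(x_t, predicted_noise, t, alpha_bars):
    """Invert the forward step given an estimate of the added noise."""
    signal_scale = math.sqrt(alpha_bars[t])
    noise_scale = math.sqrt(1.0 - alpha_bars[t])
    return [(x - noise_scale * n) / signal_scale
            for x, n in zip(x_t, predicted_noise)]


betas = linear_beta_schedule(1000)
alpha_bars = cumulative_alphas(betas)
rng = random.Random(0)

x0 = [0.5, -0.25, 1.0, 0.0]          # stand-in for a tiny latent vector
x_t, noise = add_noise(x0, 500, alpha_bars, rng)
x0_hat = recover_x0(x_t, noise, 500, alpha_bars)  # true noise used as the "prediction"
```

By the final step almost no signal remains, because `alpha_bars[-1]` is close to zero. That is why generation can start from pure Gaussian noise and denoise step by step.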

Oodles delivers diffusion model applications for automated image generation, creative workflow automation, brand-aligned content production, and custom model training, fully integrated into enterprise platforms and creative toolchains.

Why Choose Diffusion Model Applications for Visual Content

Deploy state-of-the-art diffusion models built on the Python and PyTorch ecosystem to automate creative workflows, generate high-quality visuals, and maintain strict brand consistency across large-scale image production pipelines.

High-Quality Image Generation

Generate photorealistic and artistic visuals using Stable Diffusion, SDXL, and custom PyTorch-based diffusion models.

Creative Control & Customization

Achieve precision with ControlNet, LoRA fine-tuning, and CLIP-based conditioning.

Multiple Model Support

Support for Stable Diffusion variants, custom latent diffusion models, and enterprise-trained architectures.

Production-Ready Deployment

Scalable APIs, batch inference pipelines, and GPU-optimized deployments using Docker, ONNX, and cloud GPU instances.
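The LoRA fine-tuning mentioned above rests on one idea: keep the pretrained weight matrix W frozen and learn a low-rank update, so the effective weight is W + (alpha / r) · B · A. The sketch below shows that arithmetic in plain Python. The matrix sizes, the `alpha` value, and the helper names are illustrative assumptions. A production system would typically rely on a library such as PEFT or Diffusers instead of hand-rolled matrices.

```python
import random


def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]


def lora_weight(base, lora_a, lora_b, alpha):
    """Return base + (alpha / rank) * lora_b @ lora_a; base itself is never modified."""
    rank = len(lora_a)
    scale = alpha / rank
    delta = matmul(lora_b, lora_a)
    return [[w + scale * d for w, d in zip(w_row, d_row)]
            for w_row, d_row in zip(base, delta)]


rng = random.Random(0)
d_out, d_in, rank, alpha = 8, 8, 2, 4.0   # toy sizes, chosen for illustration

base = [[rng.gauss(0, 0.02) for _ in range(d_in)] for _ in range(d_out)]
lora_a = [[rng.gauss(0, 0.02) for _ in range(d_in)] for _ in range(rank)]
lora_b = [[0.0] * rank for _ in range(d_out)]  # zero init: adapter starts as a no-op

untrained = lora_weight(base, lora_a, lora_b, alpha)

lora_b[0][0] = 1.0                      # pretend training moved one adapter weight
adapted = lora_weight(base, lora_a, lora_b, alpha)

full_params = d_out * d_in              # weights a full fine-tune would update
adapter_params = rank * (d_in + d_out)  # weights LoRA actually trains
```

At these toy sizes the adapter trains half as many parameters as a full fine-tune. At the layer widths of real diffusion models the gap is far larger, which is what makes per-brand or per-style adapters cheap to train and store.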

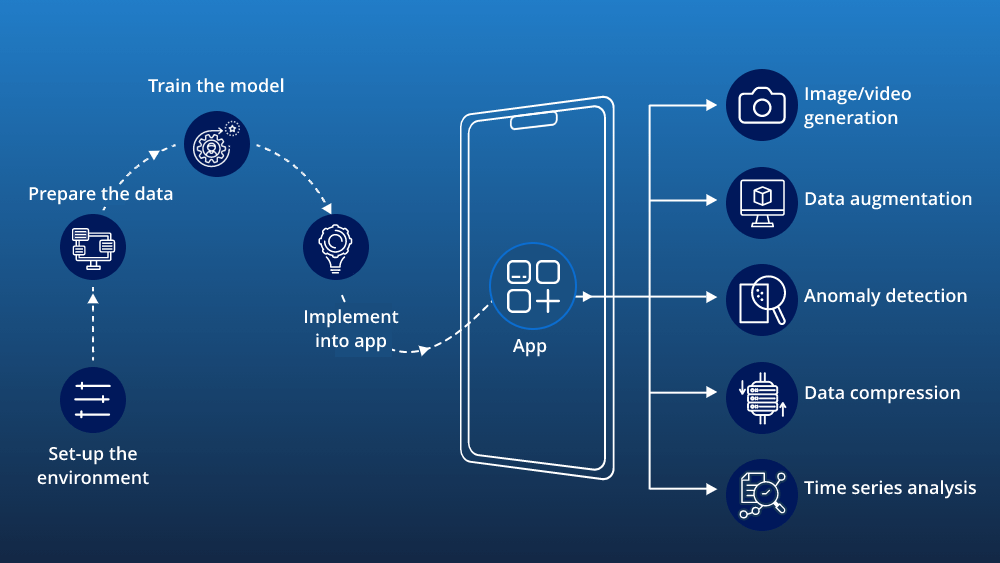

Our Diffusion Model Application Development Process

A structured, production-grade approach used by Oodles to deliver scalable diffusion model applications.

1. Requirements & Use Case Analysis: Define visual objectives, brand constraints, dataset requirements, and infrastructure needs.

2. Model Selection & Architecture: Select Stable Diffusion, SDXL, or custom PyTorch diffusion models, and integrate ControlNet, LoRA, and API layers.

3. Training & Fine-Tuning: Perform LoRA fine-tuning, dataset curation, parameter optimization, and quality benchmarking using PyTorch and CUDA.

4. Workflow Integration: Build REST/GraphQL APIs, integrate with CMS or creative tools, and implement batch generation pipelines.

5. Deployment & Monitoring: Deploy on AWS, Azure, or on-prem GPU infrastructure, and implement monitoring, logging, and continuous model optimization.
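The batch generation pipelines mentioned in the workflow integration step can be sketched as a small driver that splits prompts into fixed-size batches and isolates failures per batch. Everything here is hypothetical: `placeholder_generate` stands in for a real model call (for example a Diffusers pipeline behind an API), and the batch size is an arbitrary choice.

```python
from typing import Callable, Iterator, List, Sequence, Tuple


def batched(items: Sequence[str], batch_size: int) -> Iterator[List[str]]:
    """Yield consecutive slices of at most batch_size items."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    for start in range(0, len(items), batch_size):
        yield list(items[start:start + batch_size])


def run_batch_job(
    prompts: Sequence[str],
    generate_batch: Callable[[List[str]], List[str]],
    batch_size: int = 4,
) -> Tuple[List[Tuple[str, str]], List[str]]:
    """Run every batch; a failing batch is recorded instead of aborting the job."""
    results: List[Tuple[str, str]] = []
    failed: List[str] = []
    for batch in batched(prompts, batch_size):
        try:
            outputs = generate_batch(batch)
        except Exception:
            failed.extend(batch)   # keep the prompts so they can be retried later
            continue
        results.extend(zip(batch, outputs))
    return results, failed


def placeholder_generate(batch: List[str]) -> List[str]:
    """Hypothetical stand-in for a GPU inference call; rejects empty prompts."""
    if any(not prompt.strip() for prompt in batch):
        raise ValueError("empty prompt in batch")
    return [f"image-for:{prompt}" for prompt in batch]


prompts = [
    "red sneaker on white",
    "blue sneaker on white",
    "",                            # deliberately invalid to show failure handling
    "green sneaker on white",
    "logo on tote bag",
]
results, failed = run_batch_job(prompts, placeholder_generate, batch_size=2)
```

Recording failed prompts instead of raising keeps one bad input from sinking a long overnight job. The trade-off is that valid prompts sharing a batch with a bad one must be retried.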

FAQs (Frequently Asked Questions)

Image synthesis, text-to-image, inpainting, super-resolution, style transfer, and creative content generation across design and media.

Design tools, marketing assets, gaming, film, healthcare imaging, and product visualization leverage diffusion-based generation.

Text prompts condition the denoising process so that generated images align with the description. This approach powers DALL·E, Stable Diffusion, and similar tools.

Yes. Diffusion models excel at inpainting, outpainting, and editing regions of images while preserving context.

fal.ai, Replicate, OpenAI DALL·E, Stability AI, and custom deployments for production integration.

Cloud APIs, on-premise GPU deployment, or hybrid setups with optimization for latency and cost.

Custom pipelines, fine-tuning, API integration, UI/UX for generation, and deployment for your use case.
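As a concrete illustration of how text prompts condition the denoising process, Stable Diffusion-style pipelines commonly use classifier-free guidance. At each step the model predicts noise twice, once with the prompt and once without, and the two predictions are blended. The sketch below shows only that blending rule on toy vectors. The function name and sample numbers are assumptions for illustration.

```python
def classifier_free_guidance(noise_uncond, noise_cond, guidance_scale):
    """Blend unconditional and prompt-conditioned noise predictions.

    guidance_scale = 0 ignores the prompt, 1 uses the conditioned prediction
    as-is, and larger values push the result further toward the prompt.
    """
    return [u + guidance_scale * (c - u)
            for u, c in zip(noise_uncond, noise_cond)]


noise_uncond = [0.0, 1.0]   # toy prediction without the prompt
noise_cond = [1.0, 1.0]     # toy prediction with the prompt

ignore_prompt = classifier_free_guidance(noise_uncond, noise_cond, 0.0)
follow_prompt = classifier_free_guidance(noise_uncond, noise_cond, 1.0)
strong_guidance = classifier_free_guidance(noise_uncond, noise_cond, 7.5)
```

Higher guidance scales generally improve prompt adherence at some cost to variety, so the value is usually tuned per use case.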